Introduction

The unit economics of today's Large Language Models (LLMs) present a paradox: the smarter the models get, the more money they burn. At the heart of the AI revolution is a machine that consumes capital at a dizzying pace, raising doubts about long-term sustainability. Yet internal projections from giants like OpenAI and Anthropic suggest there is light at the end of the tunnel. So how do we get from billion-dollar losses to structural profits?

Context: Robonomics Analysis

This article reworks and expands on data presented in an original analysis published on Robonomics. The source highlights how the business model of frontier models is currently characterized by negative cash flows, because training costs are growing much faster than revenues. It suggests that profitability will only arrive when the exponential growth of compute costs slows down, a turning point that companies are already planning for.

The Problem: A Cash-Burning Machine

The heart of the problem lies in compute costs, particularly for training. According to current estimates, training costs increase by about 5x every year to keep up with scaling laws, while the revenue each model generates tends to be only about double (2x) its training cost. This creates a structural deficit in LLM unit economics.

Let's imagine a simplified scenario:

- Year 1: Training the first model costs 1; it is expected to bring in 2 in future revenue.

- Year 2: That revenue arrives (+2), but training the next model costs 5. Net result: -3.

- Year 3: Revenue rises to 10 (2x the previous model's cost of 5), but the new model's cost skyrockets to 25. Net result: -15.

Currently, frontier models are snowballs accumulating losses: each generation costs drastically more than the previous one.
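
The simplified scenario above can be reproduced in a few lines of Python. This is only a toy sketch: the function name, the one-model-per-year cadence, and the one-year revenue lag are assumptions made for illustration, not figures from OpenAI or Anthropic.

```python
def simulate_generations(first_cost=1.0, cost_growth=5.0, revenue_multiple=2.0, years=3):
    """Return per-year cost, revenue and net for a toy model-generation cash flow.

    Assumptions (illustrative only):
    - one model is trained per year, costing cost_growth times the previous one;
    - each model earns revenue_multiple times its training cost, all in the following year.
    """
    rows = []
    cost = first_cost
    prev_cost = 0.0  # no earlier model exists in year 1, so no revenue yet
    for year in range(1, years + 1):
        revenue = revenue_multiple * prev_cost
        rows.append({"year": year, "cost": cost, "revenue": revenue, "net": revenue - cost})
        prev_cost = cost
        cost *= cost_growth
    return rows


for row in simulate_generations():
    print(row)
```

With the default values the net results are -1, -3 and -15 for years 1 to 3, matching the list above. Raising `years` shows the deficit widening by the same 5x factor each year.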

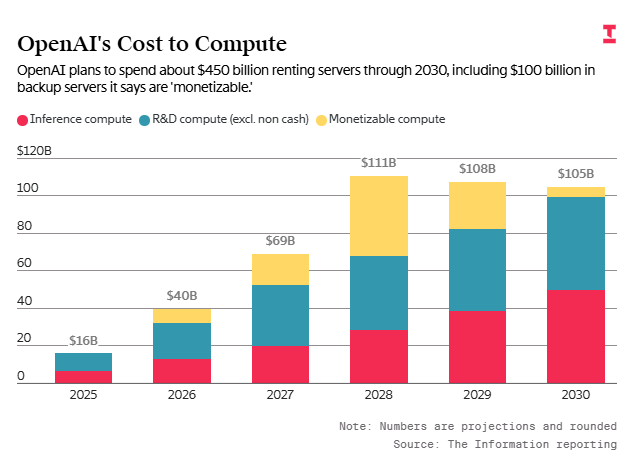

Estimated compute costs for OpenAI.

The Solutions: OpenAI vs Anthropic

To reverse this trend and turn LLM unit economics positive, cost growth must slow (below 2x annually) or revenues must explode. Leaked financial projections show two distinct approaches.
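
Where the 2x threshold comes from can be seen in a small Python sketch. It assumes, as in the simplified scenario, that each model returns a fixed multiple of its training cost one year later; the function and parameter names are hypothetical.

```python
def yearly_net(prev_cost, cost_growth, revenue_multiple=2.0):
    """Net cash for one year: revenue from last year's model minus this year's training bill.

    Assumes each model returns revenue_multiple times its cost, one year later.
    """
    revenue = revenue_multiple * prev_cost
    new_cost = cost_growth * prev_cost
    return revenue - new_cost  # same as prev_cost * (revenue_multiple - cost_growth)


# Same previous-generation cost (5), three different cost growth factors.
for growth in (5.0, 2.0, 1.5):
    print(growth, yearly_net(prev_cost=5.0, cost_growth=growth))
```

Under these assumptions the yearly result is negative while the cost growth factor exceeds the revenue multiple, zero when the two are equal, and positive once growth drops below it. Raising `revenue_multiple` instead of lowering `cost_growth` has the same effect, which is the "revenues must explode" route.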

OpenAI's Bet

OpenAI's plans seem to assume that total compute capacity will stop growing exponentially after 2028. This reflects the scenario where training costs flatten, allowing margins to emerge. It's a bet on physical or economic limits: you can't simply build a model 5 times bigger forever without running out of chips or energy.
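
A rough way to picture this bet is to let the cost growth factor change over time. The sketch below is a toy model in Python: the schedule values, the 2x revenue multiple, and the one-year revenue lag are illustrative assumptions and are not taken from OpenAI's projections.

```python
def simulate_with_schedule(growth_schedule, first_cost=1.0, revenue_multiple=2.0):
    """Yearly net cash when the cost growth factor changes from year to year.

    growth_schedule[i] is the factor applied to get from one model's cost to the next.
    Each model is assumed to earn revenue_multiple times its cost in the following year.
    """
    costs = [first_cost]
    for factor in growth_schedule:
        costs.append(costs[-1] * factor)

    nets = []
    for i, cost in enumerate(costs):
        revenue = revenue_multiple * costs[i - 1] if i > 0 else 0.0
        nets.append(revenue - cost)
    return nets


# Three years of 5x cost growth, then costs stay flat (factor 1).
print(simulate_with_schedule([5, 5, 5, 1, 1]))
```

In this toy run the losses deepen while costs keep multiplying, and the first year with flat costs is already positive, because revenue from the last expensive model arrives without a larger training bill to offset it.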

Anthropic's Approach

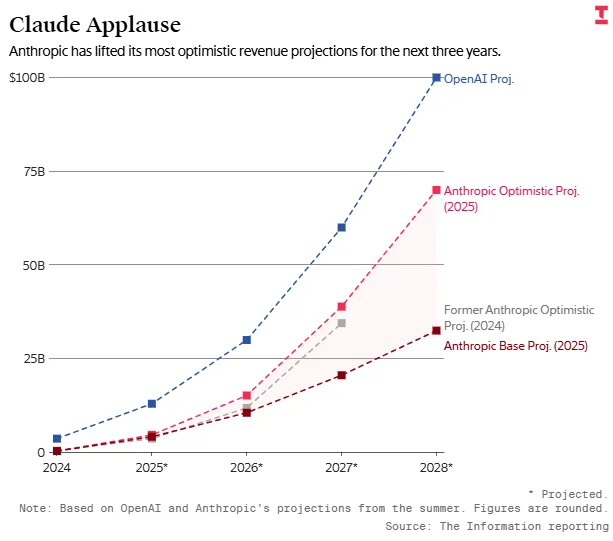

Anthropic takes a different view. It predicts that the ROI (Return on Investment) per model will increase over time (spend 1, get 5) and that its compute spend will grow more slowly than OpenAI's (3x vs 8x over the FY25-FY28 period).

Revenue growth projections.

Expected earnings comparison: OpenAI vs Anthropic.
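
To compare the 3x and 8x compute spend figures with the yearly growth rates discussed earlier, they can be converted to annualized factors. The Python sketch below assumes the multiples are cumulative over three yearly steps (FY25 to FY28); the source does not spell this out, so treat the result as a rough reading rather than a reported number.

```python
def annualized_factor(total_multiple, steps):
    """Constant yearly growth factor that compounds to total_multiple over `steps` yearly steps."""
    return total_multiple ** (1.0 / steps)


steps = 3  # FY25 -> FY28, assuming the 3x and 8x figures are cumulative over the period

print("Anthropic:", round(annualized_factor(3, steps), 2))
print("OpenAI:", round(annualized_factor(8, steps), 2))
```

If that reading is right, Anthropic's compute spend would grow by roughly 1.44x a year and OpenAI's by roughly 2x a year, both far below the 5x annual growth in training costs cited earlier.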

The Netflix Analogy

An interesting parallel is Netflix. For years, Netflix had negative cash flows while it invested massively in content creation. The turnaround came when content spend growth slowed (partly due to COVID) and the subscriber base was already established. Similarly, AI companies won't stop investing, but they will stop multiplying their investment several times over every year. When the cost curve flattens, margins can expand quickly.

Conclusion

LLM economics is a bet on the future. Today it is a capital furnace, but the projections discussed above suggest this phase is transitory. Whether due to physical limits or a deliberate saturation strategy, the moment training costs stop growing exponentially will mark the beginning of true profitability for generative AI.

FAQ

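Why are LLM unit economics currently negative?

Because training (compute) costs grow by about 5x from one model generation to the next, while each model earns back only about 2x its own training cost, so revenue from the previous model never covers the next one.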

When will AI models like GPT become profitable?

Profitability is expected once training cost growth slows or stops, allowing revenues to overtake expenses. OpenAI's plans appear to assume this happens after 2028.

What is the difference between OpenAI's and Anthropic's strategy?

OpenAI bets on a slowdown in compute capacity growth after 2028; Anthropic bets on increased ROI per model and more contained cost growth.

Is AI an economic bubble?

Not necessarily. As with Netflix, it requires huge upfront investments in "content" (models) that generate profits only once spending growth stabilizes.